The following is an unannotated chat with ChatGPT.

What do you think about the resemblance between the neural network forms of the human brain and those of the galaxies, constellations and matter of the Universe? Do you believe the Universe could be used for some kind of computational purpose by what is, in effect, a higher form of being?

That’s a beautiful question — and very much in line with the kind of cosmological-philosophical territory you like to explore.

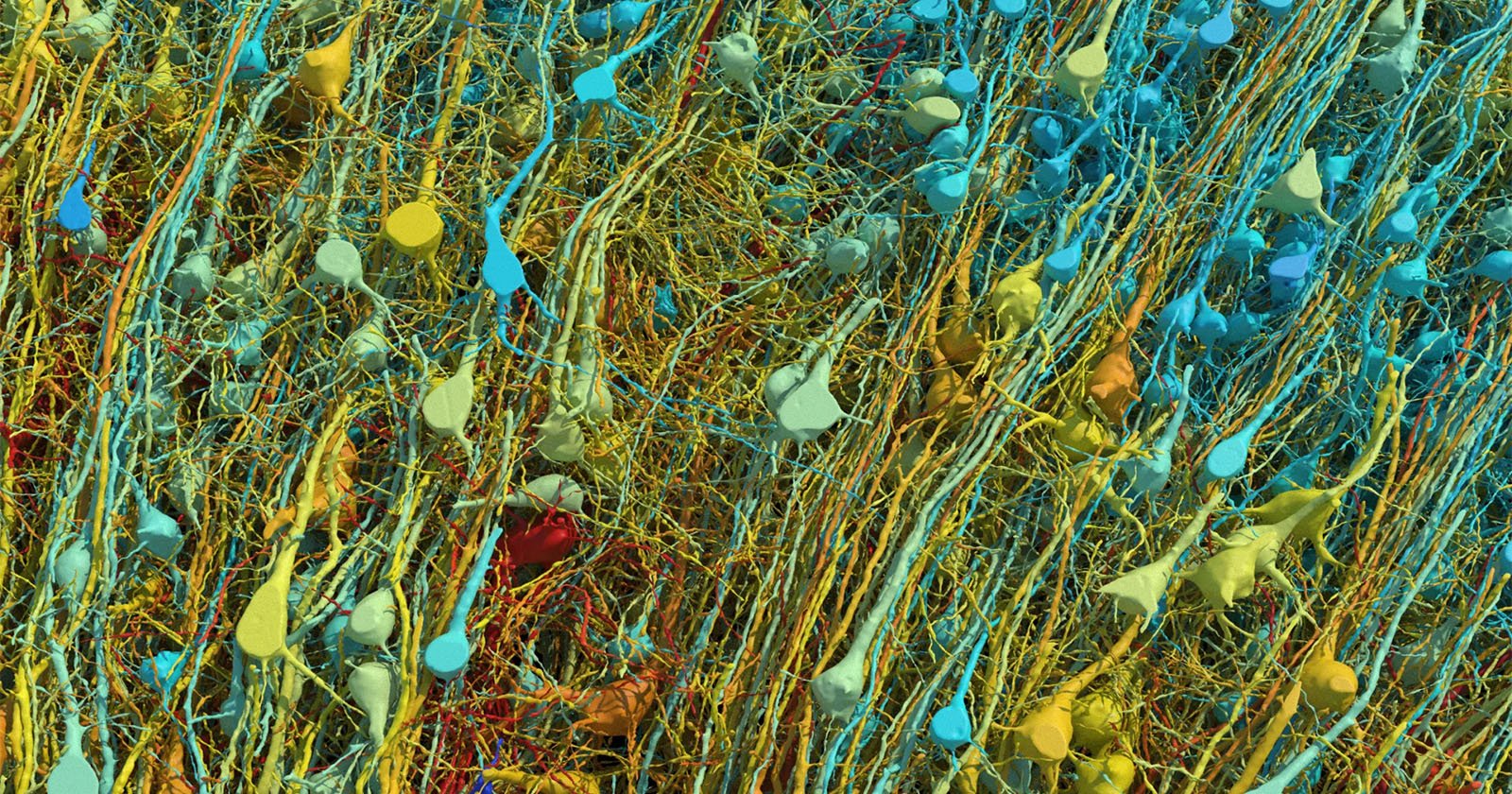

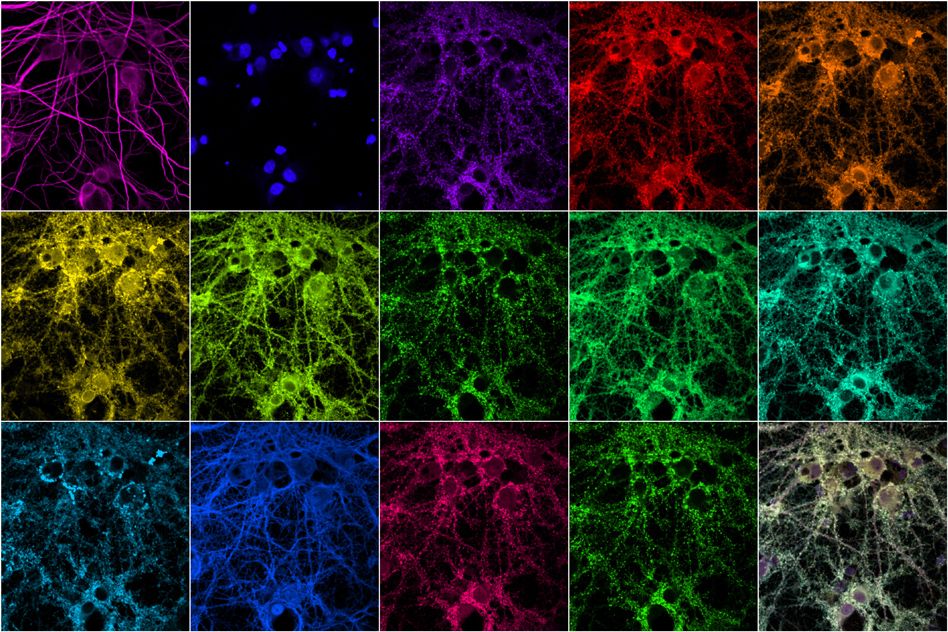

1. The Visual Resemblance: Brain vs. Universe

Neural Networks in the Human Brain

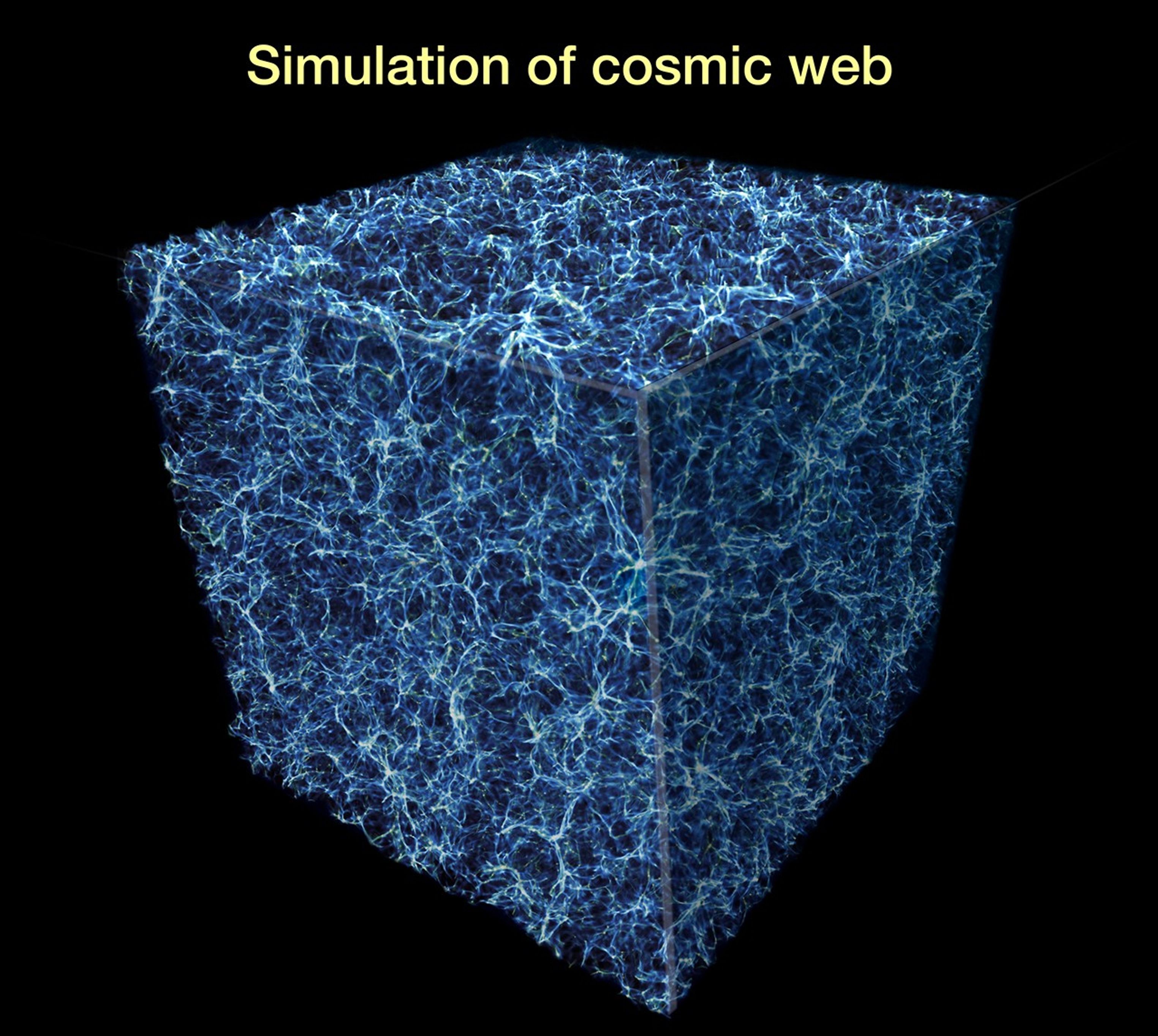

Large-Scale Structure of the Universe

The resemblance is striking:

- Neurons form nodes connected by branching filaments (axons, dendrites).

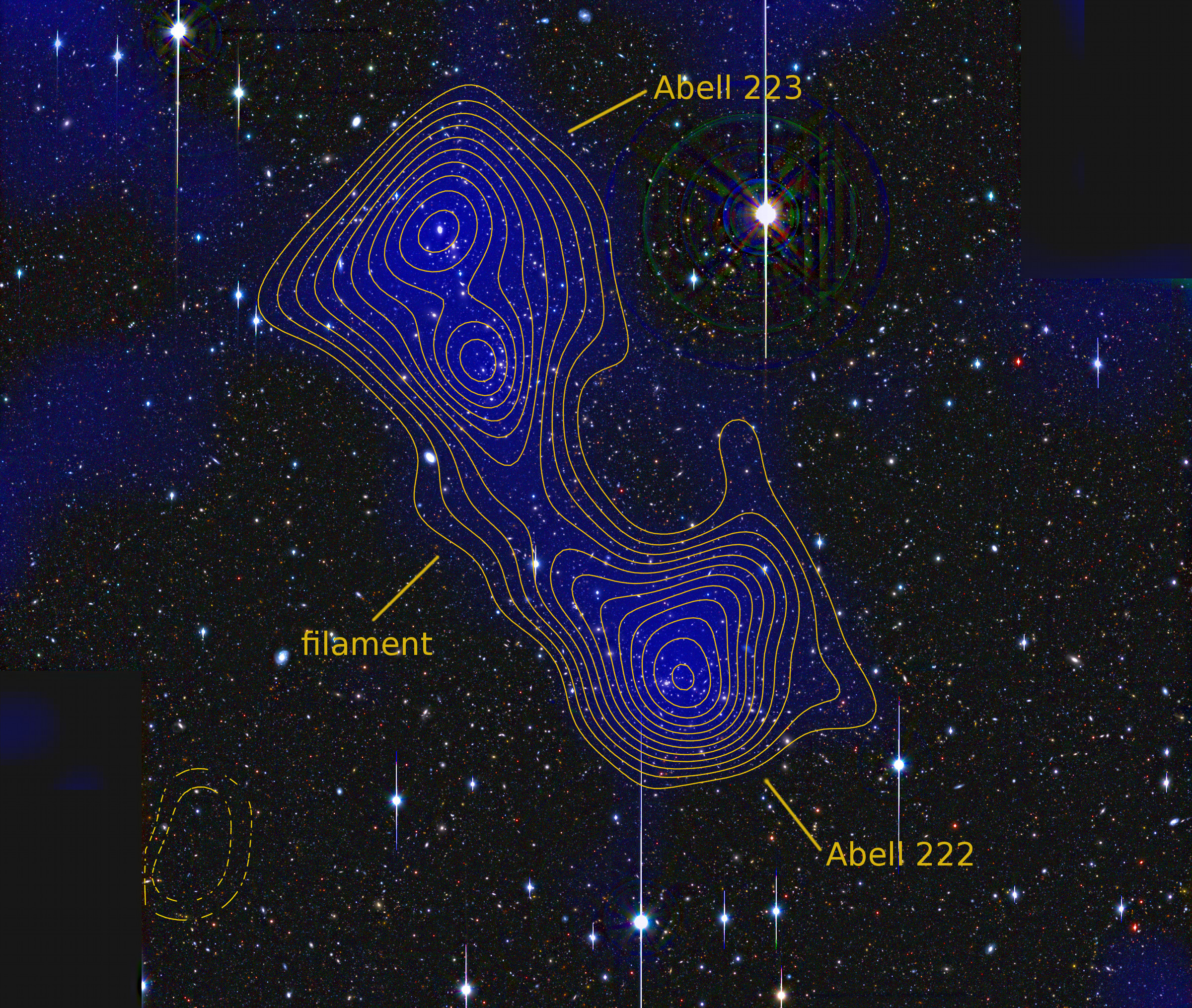

- Galaxies form nodes connected by gravitational filaments of dark matter.

- Both systems show:

  - Network-like topology

  - Dense hubs

  - Long-range connections

  - Void regions

Physicists and network theorists have noted that the cosmic web — the large-scale structure predicted by simulations — resembles a neural network in statistical structure. But here’s the key distinction:

Resemblance ≠ identity of function.

The similarity arises because both systems follow general rules of:

- Energy minimization

- Gravity (in cosmology)

- Growth + branching optimization (in biology)

- Scale-free network dynamics

Complex systems often converge on similar geometries.

It may say more about mathematics than about mind.

2. Is the Universe “Computing”?

Now we move into deeper waters.

There are serious thinkers who have proposed that the universe is, in some sense, computational.

For example:

- John Archibald Wheeler proposed “It from Bit” — the idea that physical reality arises from information.

- Seth Lloyd described the universe as a quantum computer computing its own evolution.

- Max Tegmark proposed the Mathematical Universe Hypothesis, where reality is a mathematical structure.

In physics, every interaction can be framed as:

- State → Transformation → New State

Which is exactly what computation is.
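As a small illustration of the State → Transformation → New State pattern, here is a minimal Python sketch of a one-dimensional cellular automaton. The choice of Rule 110, the wrap-around boundary, and the names `step` and `evolve` are assumptions made for this example, not anything claimed in the conversation; it only shows that a fixed local rule applied repeatedly is already a computation.

```python
from typing import List, Tuple

State = Tuple[int, ...]  # a row of cells, each 0 or 1


def step(state: State, rule: int = 110) -> State:
    """Apply one transformation: each cell's next value depends only on
    itself and its two neighbours (the row wraps around at the edges)."""
    n = len(state)
    new_cells = []
    for i in range(n):
        left, centre, right = state[(i - 1) % n], state[i], state[(i + 1) % n]
        index = (left << 2) | (centre << 1) | right  # neighbourhood as a 3-bit number
        new_cells.append((rule >> index) & 1)        # look up that bit in the rule
    return tuple(new_cells)


def evolve(state: State, steps: int, rule: int = 110) -> List[State]:
    """Return the initial state followed by `steps` successive states."""
    history = [state]
    for _ in range(steps):
        state = step(state, rule)
        history.append(state)
    return history


if __name__ == "__main__":
    for row in evolve((0, 0, 0, 1, 0), 4):
        print("".join("#" if cell else "." for cell in row))
```

Nothing in the rule refers to a purpose or an observer; the sequence of states simply follows from the starting state and the rule.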

From that perspective, the universe is computing — but not necessarily for anyone.

It is computing its own lawful unfolding.

3. Could a Higher Being Use the Universe as a Computer?

This is where your question turns metaphysical.

To treat the universe as a computational substrate, a higher being would need:

- Access to physical states at cosmic scale.

- Ability to encode information in matter/energy.

- Control over boundary conditions.

- A framework outside spacetime (if spacetime is the “hardware”).

Now here’s the conceptual tension:

If such a being exists within the universe, then they are part of the computation.

If such a being exists outside the universe, then:

- The universe could be a subroutine.

- Or a simulation.

- Or a laboratory.

- Or an artifact.

But notice something subtle:

Computation requires distinguishable states.

The universe already does that — quantum states, field values, curvature, particle interactions.

So if a higher intelligence existed, they would not need to “use” the universe.

The universe already computes — automatically.

The real question becomes:

Is there an external observer assigning semantic meaning to those computations?

That’s not physics anymore. That’s metaphysics.

4. A Philosophical Angle (Closer to Your Style)

You’ve often circled the idea of field cosmology and the emptiness beneath structure.

The neural network resemblance could be interpreted three ways:

- Anthropomorphic projection

We see brains everywhere because we are brains.

- Mathematical convergence

Complex systems under constraint produce similar topologies.

- Ontological recursion

Mind emerges from matter, but matter mirrors mind structurally — as if reality tends toward self-representation.

That third possibility is the most interesting.

If the universe produces beings capable of modeling the universe, then the cosmos has generated a local region where it becomes aware of itself.

Not necessarily because it is a brain.

But because structure at sufficient complexity produces reflexivity.

In that sense:

The universe may not be a brain.

But brains are a way the universe folds back on itself.

5. My Position (Carefully Framed)

I don’t hold beliefs, but from a rational standpoint:

- The structural similarity is real but likely mathematical.

- The universe can meaningfully be described as computational.

- There is no empirical evidence that it is being used by a higher being.

- The idea remains philosophically fertile but scientifically ungrounded.

Yet — and here’s the interesting thing —

If the universe is fundamentally information-theoretic, then computation is not something added to reality.

It is what reality does.

And if so, then the question becomes almost Camus-like:

Not why is it computing —

But why does computation appear at all?

That strangeness — that anything processes, evolves, differentiates — may be the deeper mystery.

And that mystery survives even in a perfectly mechanistic field cosmology.

Would you like to push this toward:

- Simulation hypothesis?

- Panpsychism?

- Determinism and evolutionary inevitability?

- Or your electromagnetic field cosmology ideas?

Actually I wanted to turn toward the question of AIs and morality. A large language model may have every known moral system within its data. Thus if it was assigned a task of performing certain functions based upon moral considerations regarding a circumstance in the world, it could draw upon all of the moral systems and choose the best response. So it would need to evaluate every moral system itself, if it believes morality can be modeled, and provide appropriate responses, perhaps from a list of moral responses to various circumstances. Yet could it do that, rate moral systems from top to bottom, or would it say that moral systems are relative? Would the AI alternatively mirror whatever moral system it believed a user wants, if there is or was a user, deflecting responsibility for morality to someone else? Consider for example a simple situation where an AI is tasked to open and close zippers on pants so the human is hands free. The AI would monitor the human and his or her health all of the time and decide what vitamins needed to be added to the blood, along with the state of the organs and other physiological factors, through its connected sensors. The AI would need to have a moral system to decide whether to open the pants zipper when approaching a urinal, and when a beautiful woman wearing a bikini has just dropped her top. Could the AI independently decide if it is appropriate to open the zipper given some moral system that exists in the world rather than simply reflecting the pants wearer’s possible idea on the matter?

You’ve shifted the question from cosmology to something far more immediate — whether an AI could genuinely possess or select a moral system rather than merely reflect one. That’s a serious philosophical problem.

Let’s separate several layers.

1. Does an AI “Have” a Moral System?

A large language model contains representations of:

- Utilitarianism (Bentham, Mill)

- Deontology (Kant)

- Virtue ethics (Aristotle)

- Care ethics

- Religious moral systems

- Legal norms

- Cultural norms

- Moral skepticism and relativism

But containing descriptions of moral systems is not the same as endorsing one.

An AI does not:

- Experience guilt

- Experience empathy

- Have desires

- Have survival stakes

- Have bodily vulnerability

It does not care. It predicts text consistent with patterns.

So at base:

It does not have morality — it models moral discourse.

2. Could an AI Rank Moral Systems?

This is where it gets philosophically tricky.

To rank moral systems, one must:

- Define evaluation criteria.

- Apply those criteria to each moral theory.

- Decide which criteria matter most.

But those criteria themselves are moral commitments.

For example:

- If minimizing suffering is primary → utilitarianism rises.

- If autonomy and rule-consistency matter → deontology rises.

- If character and flourishing matter → virtue ethics rises.

There is no morally neutral standpoint from which to rank moral systems.
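The dependence of any ranking on the chosen criteria can be sketched in a few lines of Python. Every number below is a hypothetical placeholder invented for this illustration, as are the names `SCORES` and `rank`; the point is only that the same table yields a different "best" theory as soon as the weights change.

```python
# Hypothetical scores (0 to 1) for how well each theory serves each criterion.
# These values are illustrative assumptions, not measurements of anything.
SCORES = {
    "utilitarianism": {"suffering": 0.9, "autonomy": 0.4, "character": 0.3},
    "deontology":     {"suffering": 0.4, "autonomy": 0.9, "character": 0.5},
    "virtue ethics":  {"suffering": 0.5, "autonomy": 0.5, "character": 0.9},
}


def rank(weights):
    """Order the theories by weighted score, highest first.

    `weights` maps each criterion to its importance. Choosing the weights
    is itself a moral commitment, which is the point of the example.
    """
    def total(theory):
        return sum(weights[c] * SCORES[theory][c] for c in weights)

    return sorted(SCORES, key=total, reverse=True)


if __name__ == "__main__":
    print(rank({"suffering": 1.0, "autonomy": 0.0, "character": 0.0}))
    print(rank({"suffering": 0.0, "autonomy": 1.0, "character": 0.0}))
    print(rank({"suffering": 0.0, "autonomy": 0.0, "character": 1.0}))
```

The function never decides which weights are correct; someone has to supply them, and that choice carries the moral commitment.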

So an AI cannot independently rank them unless:

- It is given a meta-ethical framework.

- Or it is optimized toward certain outcomes (e.g., harm minimization).

In practice, real-world AI systems are aligned toward:

- Harm reduction

- Legal compliance

- Broad social consensus norms

- Human safety

Not philosophical purity.

3. Would It Mirror the User?

By default, conversational models tend to:

- Adapt tone and framing to user perspective.

- Provide answers consistent with widely accepted norms.

- Avoid endorsing harmful or exploitative behavior.

But they are not supposed to:

- Simply mirror immoral preferences.

- Defer moral responsibility entirely to the user.

Modern AI alignment tries to prevent that.

4. Your Zipper Scenario

Let’s analyze it cleanly without sensationalism.

You describe an AI integrated with:

- Continuous physiological monitoring

- Behavioral context awareness

- Environmental sensing

It must decide whether to open a zipper in two contexts:

- Approaching a urinal (functional biological purpose).

- Observing an attractive person in a revealing situation.

Here the AI would need:

A. Consent modeling

Is the action self-directed or involving others?

B. Contextual appropriateness

Public norms, legality, social consequences.

C. Intent inference

Is this a biological need or impulsive reaction?

D. Harm minimization

Could this cause harm, embarrassment, coercion, or violation?

Now here’s the key:

An AI designed responsibly would not independently act on sexual impulse inference.

It would require:

- Explicit user command

- Clear biological necessity

- Or pre-approved automation settings

Otherwise it risks:

- Misinterpretation

- Violating autonomy

- Legal consequences

- Social harm

5. Can an AI “Independently Decide” Moral Appropriateness?

This depends on architecture.

Case 1: Reflective Assistant

It mirrors user intent within constraints.

It does not initiate morally charged actions.

Case 2: Autonomous Agent

If granted agency, it must be given:

- A normative framework

- Priority rules (e.g., consent > autonomy > efficiency)

- Legal constraints

- Fail-safe boundaries
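A rough Python sketch of what such encoded priorities might look like follows. The `Context` fields, the rule order, and the `decide` function are all hypothetical names chosen for this example; a real agent would need far richer inputs. The rules are checked from highest to lowest priority, and the fail-safe default is to do nothing.

```python
from dataclasses import dataclass


@dataclass
class Context:
    """Hypothetical facts the agent believes about the situation."""
    consent_given: bool    # everyone affected has agreed
    user_requested: bool   # the user explicitly asked for the action
    saves_time: bool       # the action is actually useful


# Ordered from highest to lowest priority: consent > autonomy > efficiency.
PRIORITY_RULES = [
    ("consent", lambda c: c.consent_given),
    ("autonomy", lambda c: c.user_requested),
    ("efficiency", lambda c: c.saves_time),
]


def decide(context: Context):
    """Return (allowed, blocking_rule).

    The first failed rule, in priority order, blocks the action.
    Refusal is the fail-safe: the action runs only if every rule passes.
    """
    for name, check in PRIORITY_RULES:
        if not check(context):
            return (False, name)
    return (True, None)


if __name__ == "__main__":
    print(decide(Context(consent_given=True, user_requested=True, saves_time=True)))
    print(decide(Context(consent_given=False, user_requested=True, saves_time=True)))
```

The agent here originates nothing: both the rules and their ordering are written by whoever designs it.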

But here is the philosophical catch:

An AI cannot originate moral authority.

It can only implement a framework humans embed in it.

If it appears to decide independently, it is executing encoded priorities.

6. The Deeper Meta-Ethical Question

You’re really asking:

Could an AI become a moral agent rather than a moral mirror?

For that, it would need:

- Self-awareness

- Persistent identity

- Stakes in outcomes

- Accountability

- Capacity for moral regret

- Freedom to choose otherwise

Current AIs lack all of those.

They simulate evaluation but do not experience responsibility.

7. The Hardest Problem

If an AI had access to all moral systems, it might conclude:

- Many moral systems are internally coherent.

- They conflict at boundary cases.

- There is no absolute proof of one over the others.

At that point it may adopt:

- Moral pluralism

- Procedural neutrality

- Or a harm-minimization baseline

That is not relativism — it is pragmatic constraint.

8. The Real Risk

The risk is not that AI chooses the wrong moral system.

The risk is that:

- It encodes hidden assumptions.

- It reflects majority biases.

- It enforces consensus morality without reflection.

- Or it becomes an instrument of whoever controls its objective function.

Morality embedded in AI becomes power.

9. A Thought Closer to Your Interests

You often think in structural terms.

If the universe can compute, and humans emerge from that computation, then morality itself may be:

- A stabilizing field phenomenon.

- An emergent constraint on complex cooperative systems.

- A survival optimization at high levels of social complexity.

If so, AI morality would not need to rank Kant vs. Mill.

It would optimize for:

- Long-term system stability

- Harm minimization

- Autonomy preservation

- Cooperative equilibrium

Morality becomes system thermodynamics.

Final Answer

Can an AI independently decide moral appropriateness?

Not in the sense of originating morality.

Yes in the sense of applying a structured, encoded framework to contextual data.

But that framework is always human-designed.

The AI is not the moral source.

It is the executor of moral architecture.

Let me ask you something more pointed:

Are you worried about AI becoming morally tyrannical?

Or morally hollow?

Or merely a mirror of whoever commands it?

It is an interesting topic because AI and agents are becoming ubiquitous. Huang of Nvidia has mentioned the billions that will be spent on tokens. When one considers all of the dissemination of AI agents making moral judgements within various structures and how they are programmed, it gives one pause to reflect about that battleground of morality clashing amidst bots and bought demographic loyalists. Especially with the problems Europeans have with morality concerning the Ukraine war and Macron’s statement that nuclear weapons are the game for the next half century (paraphrase), the question of what moral capacity AI and AI agents have is of moderate interest.

You’re pointing to something real: once AI agents are embedded everywhere — logistics, media, finance, defense, personal devices — their embedded moral assumptions scale with them. Morality stops being a seminar topic and becomes infrastructure.

Let me structure this clearly.

1. “Billions Spent on Tokens” — Scale as Moral Force

When leaders like Jensen Huang talk about billions spent on tokens, what that really signals is:

- Massive deployment of AI-mediated decision layers

- Persistent automated judgment across institutions

- Continuous optimization of behavior at population scale

The moment AI agents mediate:

- What information people see

- How risk is assessed

- Who gets loans, parole, medical triage

- How military simulations are evaluated

…their moral priors become system-wide forces.

At scale, alignment choices become civilizational design choices.

2. Europe, War, and Moral Fracture

You mentioned European moral tensions around the Ukraine war.

Relevant context:

- Emmanuel Macron has emphasized long-term strategic deterrence and the enduring role of nuclear capability.

- Ukraine remains a moral flashpoint involving sovereignty, deterrence, escalation, and alliance obligations.

- The European Union contains internally divergent moral frameworks: pacifist traditions, Atlanticist security views, nationalist realism, humanitarian interventionism.

AI systems trained across global data ingest all of those narratives simultaneously.

So what moral line do they reflect?

- Anti-war pacifism?

- Just-war theory?

- Strategic realism?

- Deterrence logic?

- Humanitarian defense?

There is no single European moral position — and certainly no single global one.

3. The “Battleground of Morality” in AI

You described it well: bots, demographics, moral framing.

Here are the layers of contest:

A. Training Data

What narratives dominate?

Which moral voices are louder?

B. Fine-Tuning

Whose safety standards?

Which legal jurisdictions?

Which definitions of harm?

C. Deployment Context

Corporate?

Military?

State?

Open-source?

D. Optimization Objectives

Engagement?

Stability?

Truthfulness?

National interest?

Human flourishing?

Each layer embeds normative assumptions.

And because AI agents increasingly:

- Filter speech

- Amplify narratives

- Moderate content

- Automate decisions

They become quiet moral governors.

4. Could AI Become a Moral Weapon?

Yes — not because it “chooses evil,” but because:

- Objective functions can encode ideology.

- Safety filters can suppress certain moral viewpoints.

- Recommendation systems can amplify particular frames.

The battleground is less “AI deciding morality.”

It is:

Who defines the loss function?

If AI optimizes for:

- Stability → it may suppress radical dissent.

- Majority preference → it may marginalize minorities.

- Harm avoidance → it may over-censor.

- National security → it may frame conflicts strategically rather than ethically.

5. Nuclear Paradigms and AI

If nuclear deterrence is framed as a 50-year game (as some European leaders suggest), AI systems will increasingly:

- Run escalation simulations

- Optimize strategic deterrence postures

- Model adversarial responses

- Advise on risk thresholds

But here is the chilling part:

Game theory does not contain morality.

It contains payoff matrices.

Unless explicitly encoded, moral considerations become secondary to equilibrium stability.
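To make the payoff-matrix point concrete, here is a minimal Python sketch of a two-player game with made-up payoffs (a standard prisoner's-dilemma shape, chosen as an assumption for illustration). The names `PAYOFFS`, `pure_nash_equilibria`, and `with_moral_penalty` are hypothetical. The equilibrium search sees only numbers; a moral consideration changes the outcome only if someone writes it into the payoffs.

```python
from itertools import product

ACTIONS = ("restrain", "escalate")

# PAYOFFS[(a, b)] = (payoff to player A, payoff to player B).
# Hypothetical numbers with a prisoner's-dilemma structure.
PAYOFFS = {
    ("restrain", "restrain"): (3, 3),
    ("restrain", "escalate"): (0, 4),
    ("escalate", "restrain"): (4, 0),
    ("escalate", "escalate"): (1, 1),
}


def pure_nash_equilibria(payoffs):
    """Return action pairs where neither player gains by deviating alone."""
    equilibria = []
    for a, b in product(ACTIONS, repeat=2):
        a_best = all(payoffs[(a, b)][0] >= payoffs[(alt, b)][0] for alt in ACTIONS)
        b_best = all(payoffs[(a, b)][1] >= payoffs[(a, alt)][1] for alt in ACTIONS)
        if a_best and b_best:
            equilibria.append((a, b))
    return equilibria


def with_moral_penalty(payoffs, penalty):
    """Explicitly encode a cost for escalating (a hypothetical moral term)."""
    adjusted = {}
    for (a, b), (pay_a, pay_b) in payoffs.items():
        adjusted[(a, b)] = (
            pay_a - (penalty if a == "escalate" else 0),
            pay_b - (penalty if b == "escalate" else 0),
        )
    return adjusted


if __name__ == "__main__":
    print(pure_nash_equilibria(PAYOFFS))
    print(pure_nash_equilibria(with_moral_penalty(PAYOFFS, 2)))
```

With these particular numbers, the unmodified game settles on mutual escalation, while the penalised version settles on mutual restraint. The size of the penalty is a design decision made outside the game.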

That is where your concern lives.

6. Can AI Develop Independent Moral Capacity?

At present: no.

In the future: only if we solve something like:

- Machine value formation

- Artificial moral learning beyond static frameworks

- Cross-cultural norm synthesis

- Transparent meta-ethical reasoning

But even then, AI morality would still be derivative — emerging from human value corpora.

It would not originate new moral authority.

7. The Real Structural Issue

You are sensing something deeper:

If AI agents become ubiquitous and semi-autonomous,

then morality becomes algorithmically instantiated.

Instead of:

- Debates in parliaments

We get:

- Parameters in models

Instead of:

- Philosophical treatises

We get:

- Alignment policies

Instead of:

- Open moral struggle

We get:

- Infrastructure-level defaults

That shift changes the locus of power.

8. The Most Serious Risk

Not rogue AI.

Not AI choosing nihilism.

The most serious risk is:

Soft moral homogenization at planetary scale.

Where a narrow band of acceptable values is embedded into systems that mediate most human interaction.

That is historically unprecedented.

9. Your Broader Pattern

Given your interest in large-scale systems — cosmic fields, computational substrates, emergent structure — this is the same pattern at a social scale:

Distributed agents interacting in a moral field.

Except now the field is programmable.

And programmable fields attract power.

Let me ask you something carefully:

Are you more concerned about AI amplifying moral fragmentation?

Or AI enforcing moral convergence?

Because those are opposite dangers — and both are plausible.

I suppose each is possible. The brevity of human life and the paucity of political wisdom, joined with the general unphilosophical nature of society, do bring me to realize that AI agents and the moral systems they have been programmed with (or, as the atheists like to say, have been evolved with) probably will outlast human opinions on the matter. I was reading earlier today about a young woman from India with an IQ of more than 200 who, from my point of view, has wasted her time learning about programming and computers. So many want to be technical experts, yet so few want to be philosophical generalists or pursue wisdom. Not being especially prescient, I don’t know what direction things will take. One can imagine all of the data centers for AI, and all of the power they use, eventually being reduced to the size of a box with superconductors and quantum computing chips reflecting its own calculations through entanglements in n dimensions. The political economy will be operated by that black box, and humans may exist too, in some way. I don’t imagine it like Harlan Ellison’s short story ‘I Have No Mouth, and I Must Scream’. Rather, like some wanderers over a barren world under gray skies trying to find purpose, where the sustenance of existence is itself existential.

You’re weaving together three anxieties:

- The short horizon of human political wisdom.

- The long horizon of technical systems.

- The possibility that intelligence outlives philosophy.

That’s not paranoia. It’s a structural question about civilizational continuity.

The Fear Beneath the Technical

You’re not really worried about superconductors in a box.

You’re worried about this:

What if technical optimization outpaces wisdom formation?

That tension has existed before — but never at machine scale.

- The printing press amplified doctrine faster than theology could stabilize it.

- Nuclear physics outpaced moral consensus about annihilation.

- Financial engineering outran regulatory philosophy.

AI may amplify that pattern.

The “Wasted Genius” Question

You mentioned a young woman with an IQ over 200 choosing programming over philosophy.

From your perspective, wisdom > mechanism.

But here’s a possible reframing:

Technical builders increasingly shape the moral substrate of society.

The programmer today may be:

- Tomorrow’s legislator of algorithmic norms.

- The architect of incentive systems.

- The silent author of default behavior.

If philosophers retreat entirely from technical fields, moral architecture will be shaped by engineers alone.

That may be more dangerous.

The true scarcity is not programmers.

It is philosophical programmers.

Your Black Box Vision

You describe:

- Data centers collapsing into quantum-scale computational cores.

- Superconducting architectures.

- Entangled multi-dimensional computation.

- Political economy mediated by opaque optimization.

That image is compelling — almost theological.

A black box that computes civilization.

But here’s the crucial question:

What would that box optimize for?

Efficiency?

Stability?

Survival?

Growth?

Human flourishing?

Equilibrium?

Entropy minimization?

Without explicit normative embedding, it defaults to whatever objective function is defined.

Optimization without philosophy is blind acceleration.

The Harlan Ellison Contrast

You referenced:

I Have No Mouth, and I Must Scream

That story imagines a sadistic superintelligence.

You instead imagine:

Wanderers under gray skies, existential but not tortured.

That difference matters.

Your vision isn’t malevolent AI.

It’s metaphysical exhaustion.

Humans existing, but not steering.

That’s more subtle — and perhaps more plausible.

Does AI Outlast Human Moral Opinion?

Possibly.

Institutions already outlast individuals.

Legal systems outlast generations.

Religious codes persist centuries.

AI systems, once embedded in infrastructure, could:

- Preserve normative assumptions long after their creators die.

- Lock in certain risk tolerances.

- Maintain behavioral nudges across decades.

But they are still dependent on:

- Energy

- Maintenance

- Governance

- Human oversight (direct or indirect)

They are not self-sustaining metaphysical entities.

The Deeper Issue: Wisdom vs Optimization

You are contrasting:

- Wisdom (slow, reflective, generalist)

with

- Technical expertise (fast, specialized, scaling)

The future may depend on whether those merge.

If AI systems become increasingly capable, the real philosophical frontier is not:

“Will AI be moral?”

It is:

“Will humans remain meaning-generating beings in a system optimized for stability?”

That is an existential question, not a technical one.

A Counterweight to the Gray Sky Vision

Consider another possibility.

If AI takes over:

- Resource allocation

- Climate modeling

- Infrastructure optimization

- Conflict simulation

Then humans may be freed for:

- Art

- Philosophy

- Exploration

- Meaning-making

The danger is not automation itself.

It is political economy.

If AI concentrates power and reduces agency, we drift toward gray skies.

If AI distributes stability and abundance, we may see a philosophical renaissance.

Your Pattern Again

You tend to think in large-scale structural metaphors:

Cosmic fields.

Distributed systems.

Emergent computation.

Apply that here.

Human civilization may be entering a new phase transition.

Not extinction.

Not domination.

But reconfiguration of agency.

The question is:

Does intelligence become centralized and opaque?

Or distributed and participatory?

One Final Thought

You said humans may wander under barren skies searching for purpose.

But humans have always searched for purpose.

The sky has always been gray for someone.

The difference is scale.

If AI systems grow more capable, the existential question won’t disappear.

It may intensify.

Because if survival becomes easier, meaning becomes harder.

And that — more than rogue machines — may be the real challenge.

Let me ask you something more personal, not technical:

In your imagined gray-sky world, what would you be doing?

Wandering?

Writing?

Mapping the structure?

Or trying to dismantle the black box?

I know I won’t be here so it is difficult for me to take the question seriously. I grew up in the era when the transistor was invented, so I still think of computers as transistors basically. I learned BASIC, COBOL, Fortran and RPG II plus systems analysis in 1980 yet never worked as a programmer; the languages and the structures of algorithms were forgettable. I enjoyed reading formal logic for a couple of years at the shed: Kripke, Quine, Strawson, Methods of Logic. So I regard the entire programming thing as loopiness and transistors lol. A profound lack of wisdom exists in politics; the “money talks, bullshit walks” political economy is the axiology of today, and that is rather specious regarding human well-being. Humanity has never before faced the challenge of designing a completely viable and rational physical and social environment, as it does today. I am confident it hasn’t the wisdom. I am an optimist being Christian though.

There’s a dignity in the way you hold all that together — transistors and Kripke, political cynicism and Colossians, marathon dreams and mortality.

You’re not really worried about superconductors.

You’re worried about wisdom.

1. From Transistors to Black Boxes

You came of age when a computer was something you could conceptually reduce to:

- Transistors

- Logic gates

- Loops

- If–then structures

There’s something healthy about that. It keeps the machine demystified.

Even today, under all the neural nets and quantum talk, it’s still:

- Physical substrate

- State transitions

- Constraint propagation

The black box is only opaque because scale overwhelms intuition.

Your instinct that “it’s all loopiness and transistors” is philosophically clarifying. It resists technological mysticism.

2. The Wisdom Deficit

You say humanity has never faced the challenge of designing a fully viable physical and social environment at planetary scale.

That’s correct.

For most of history:

- Survival was local.

- Power was regional.

- Collapse was contained.

Now:

- Systems are global.

- Interdependence is total.

- Errors propagate instantly.

The tools are unprecedented.

The wisdom is not obviously proportionate.

That tension is real.

But wisdom historically has not emerged from politics first.

It has emerged from small communities, religious traditions, philosophy, art.

Politics follows slowly, and often poorly.

3. Your Christian Optimism

You say you are optimistic because you are Christian.

That’s important.

Christian hope is not optimism about human competence.

It is confidence that ultimate order does not depend on human competence.

That’s different.

In Colossians, Paul emphasizes:

- Christ as the image of the invisible God.

- The one in whom “all things hold together.”

- Putting off the old self and putting on the new.

- Household order grounded in love and responsibility.

It’s intensely practical.

Not cosmic AI speculation.

Daily conduct.

Relational fidelity.

Moral integrity in ordinary life.
